TikTok, one of the most popular social media platforms among teenagers, is under scrutiny for its role in exacerbating eating disorders among young people in the UK. With tailored algorithms and an ever-changing feed of appearance-orientated content, experts are now asking: is the app encouraging harmful behaviours under the guise of fitness and lifestyle content?

Eating Disorders on the Rise

- Beat Eating Disorders estimates that 1.25 million people in the UK currently have an eating disorder, with anorexia having the highest mortality rate of any psychiatric disorder

- The NHS recorded that the proportion of young people aged 17 to 19 with possible eating problems rose from 44.6% in 2017 to 58.2% in 2021

- The National Institute for Health and Care Excellence reported that between 2015/16 and 2020/21, hospital admissions in England for eating disorders increased by 84%

The UK Government warns that “eating disorders are serious mental health conditions with potentially devastating psychological, physical, and social consequences.” These disorders can range from eating too much to eating far too little, often rooted in a deeply distorted body image.

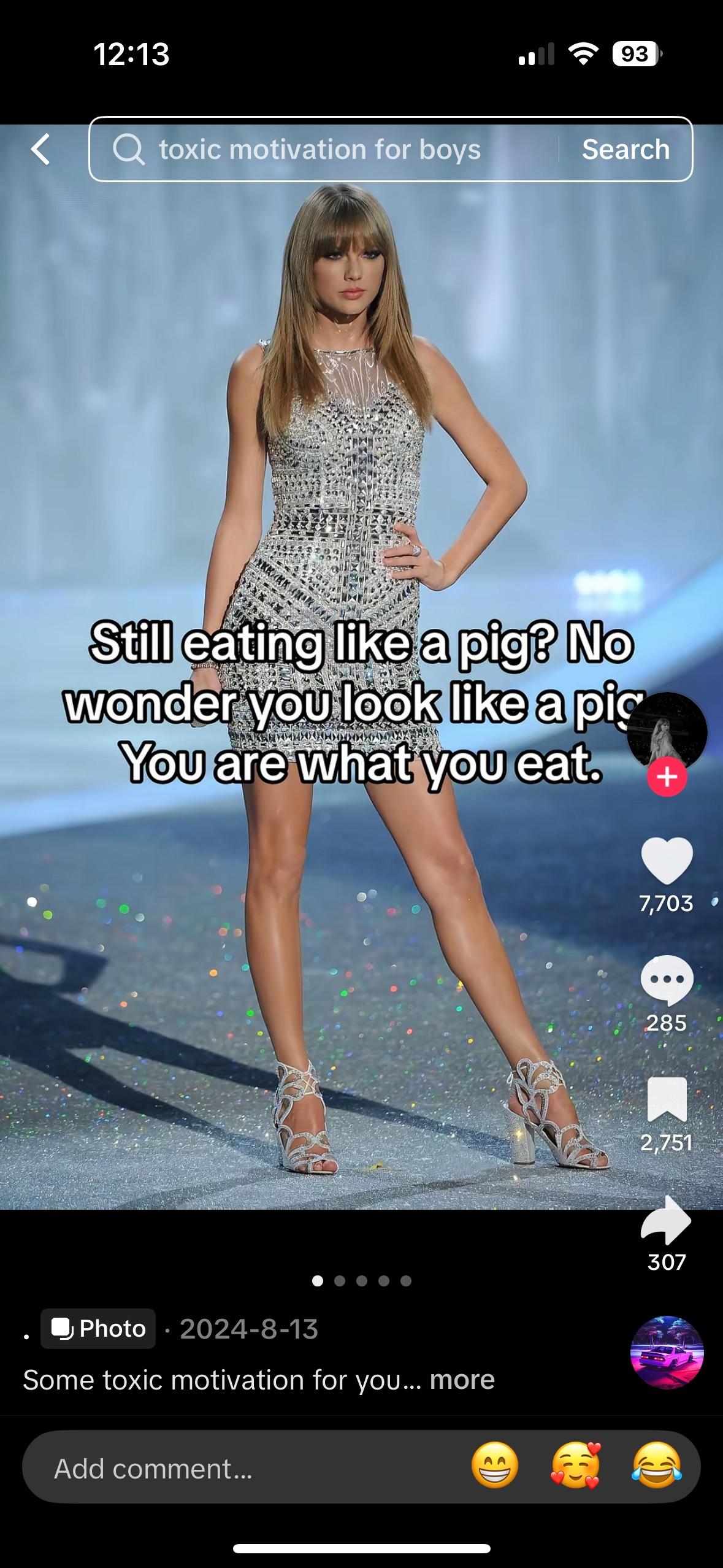

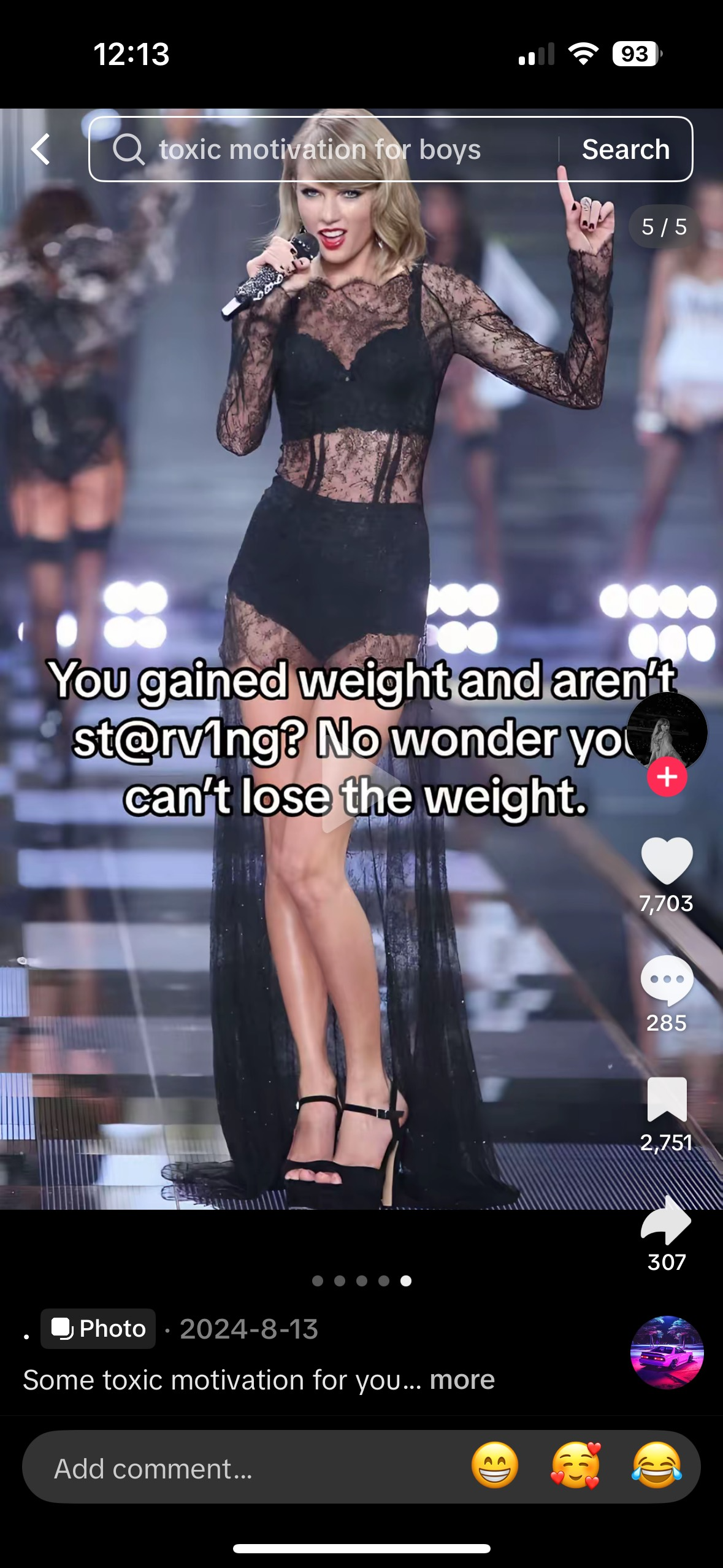

Now, experts are sounding the alarm on social media platforms like TikTok, accusing them of fuelling unhealthy eating habits and reinforcing toxic body standards among young people.

TikTok: A Platform for Toxicity?

Launched globally in 2017 by Chinese tech giant ByteDance, TikTok has exploded into the fifth most popular social media platform, boasting around 2 billion users worldwide.

Known for its addictive short-form videos and viral trends, the app now sees over a billion videos viewed daily – despite being banned in countries like India. Its meteoric rise reflects a seismic shift in how Gen Z consumes content: fast, mobile, and endlessly scrollable.

As one of the most popular platforms among teenagers, TikTok influences the way young people understand themselves and the world around them.

Inside the Algorithm

TikTok’s algorithm uses data from user interactions – such as watch time, likes, and shares – along with video details like hashtags and sounds to serve highly personalised content.

Because the algorithm prioritises engagement above all, it can quickly create what is known as an echo chamber, in which users are shown more and more of the content they have already engaged with. Experts warn this can be harmful, especially for young users, as it may promote extreme content, including videos linked to disordered eating habits.

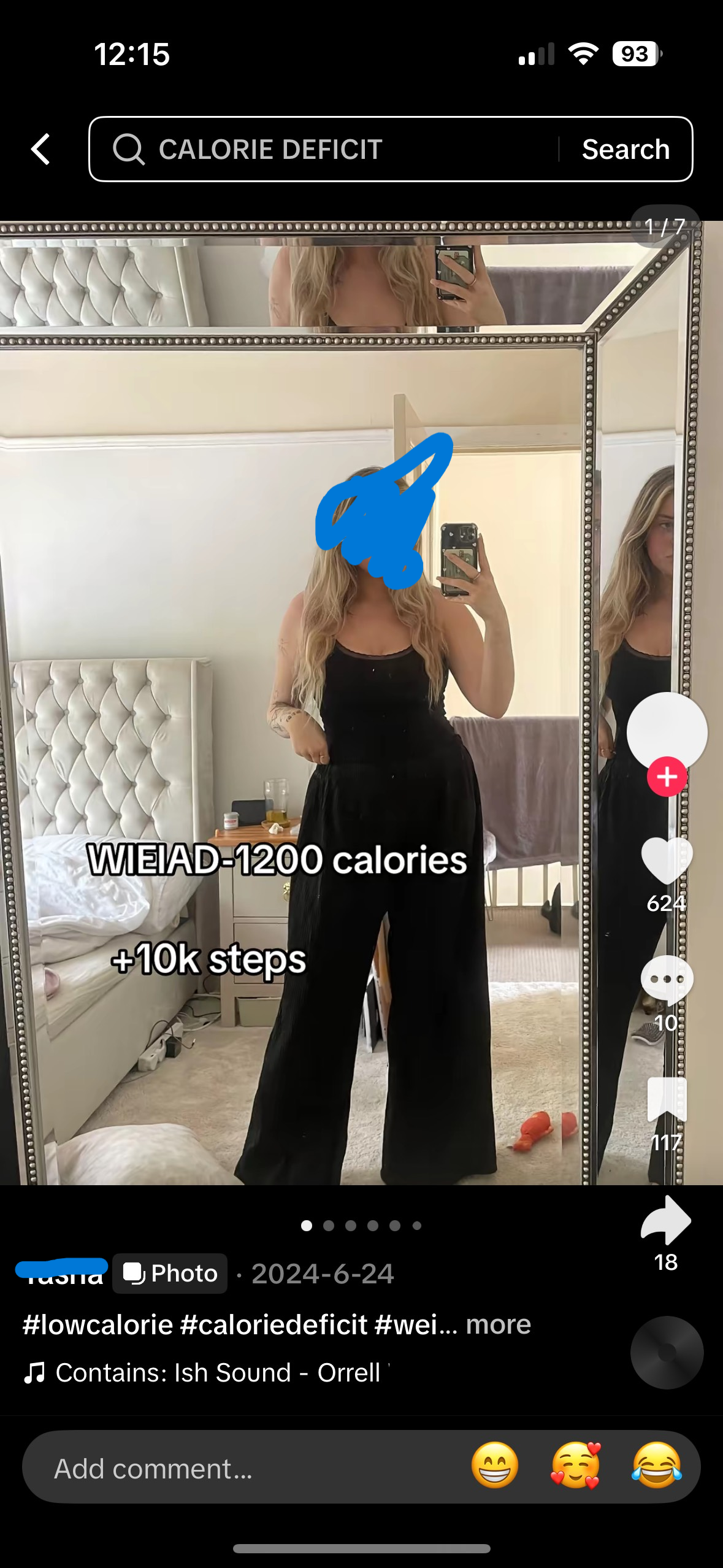

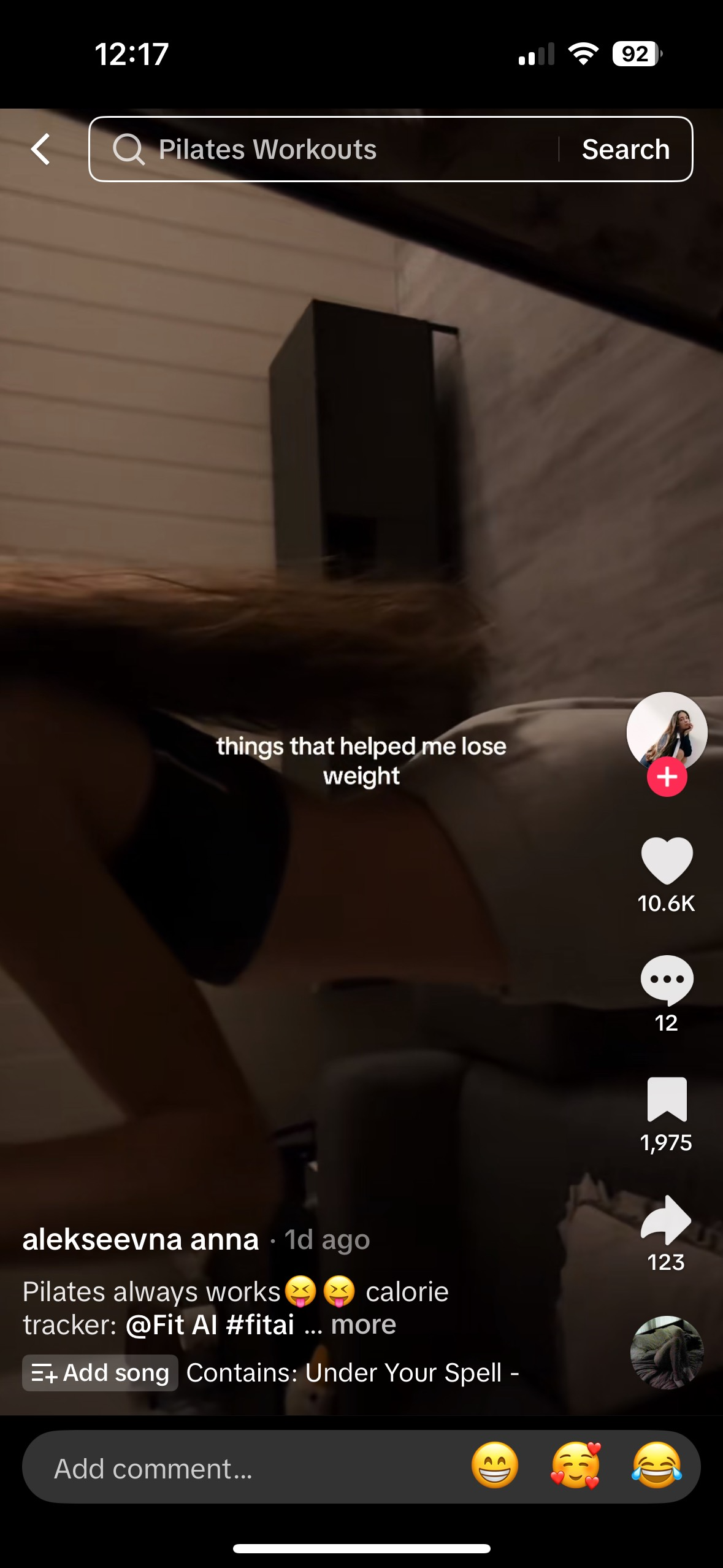

For example, a teen who watches a few weight-loss videos might soon be flooded with dieting or eating-disorder-related content that reinforces harmful behaviour patterns.
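The feedback loop described above can be illustrated with a toy model. This is a minimal sketch under stated assumptions, not TikTok's actual system: the signal weights, the three topics, and the rule that the user likes every diet video while only watching the rest are all hypothetical, chosen purely to show how an engagement-driven feed can drift towards one topic.

```python
# Toy model of an engagement-driven feed. All names and numbers here are
# hypothetical illustrations, not details of any real platform.

# Assumed weights: a like counts as a stronger interest signal than a watch.
SIGNAL_WEIGHTS = {"watch": 1.0, "like": 2.0}


def feed_share(interest):
    """Return the fraction of the feed given to each topic,
    proportional to the user's accumulated interest score."""
    total = sum(interest.values())
    return {topic: score / total for topic, score in interest.items()}


def simulate(rounds):
    """Track the share of diet content in the feed over several rounds."""
    # The user starts with equal interest in every topic.
    interest = {"comedy": 1.0, "music": 1.0, "diet": 1.0}
    diet_history = []
    for _ in range(rounds):
        shares = feed_share(interest)
        diet_history.append(shares["diet"])
        for topic, share in shares.items():
            # Assumption: the user likes diet videos and merely watches the rest.
            signal = "like" if topic == "diet" else "watch"
            # More exposure to a topic means more chances to engage with it.
            interest[topic] += share * SIGNAL_WEIGHTS[signal]
    return diet_history


if __name__ == "__main__":
    for round_number, share in enumerate(simulate(10)):
        print(f"round {round_number}: diet content is {share:.0%} of the feed")
```

In this sketch the diet share starts at one third and grows every round, because each like raises the score that decides how much diet content appears next. Real recommendation systems are far more complex, but the loop of exposure, engagement, and further exposure is the pattern experts describe.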

What Do the Stats Say?

A 2024 UK study on ScienceDirect, covering more than a million videos, found that users with eating disorders (EDs) were shown over 4,000% more toxic content than healthy users – including pro-anorexia (‘pro-ana’), extreme dieting, and appearance-obsessed videos. Even just liking a diet video led to a 335% spike in similar content on users’ feeds. Worryingly, 79% of those shown over 1,000 harmful videos per month had an eating disorder.

Despite TikTok’s claims of moderation, teens continue to find ‘thinspiration’ and ‘fitspiration’ content through altered hashtags. Experts warn this relentless stream of idealised body imagery and disordered advice not only triggers but sustains ED behaviours, especially among young users. And with TikTok now the top social media app for teens in ED treatment, critics say its algorithm isn’t just reflecting trends – it’s reinforcing illness.

A 21-year-old from Cambridge, who was diagnosed with anorexia at just 13, spoke anonymously about TikTok’s damaging impact on her recovery. She described the platform’s algorithm as “lethal”, saying her For You Page was flooded with “triggering content” – including pro-anorexia imagery, unrealistic body standards, and extreme daily food intakes. She said: “One of the reasons I have not been able to fully recover is because TikTok keeps showing me fitness content and eating disorder posts. It never really stops.”

Why TikTok Stands Out

TikTok’s algorithm is significantly more aggressive and personalised than those of platforms like Instagram or Facebook – and this makes it particularly impactful, and potentially dangerous, when it comes to eating disorder content.

For example, TikTok’s algorithm rapidly adapts to user interactions, pushing highly personalised content from strangers rather than just accounts users follow.

Unlike Instagram or YouTube, where harmful content usually requires active searching, TikTok passively delivers such videos based on inferred interest. Its fast-paced, short-form videos often disguise disordered messages as lifestyle or wellness trends, making them easy to consume repeatedly. With content driven almost entirely by the algorithm rather than community, harmful trends can spread quickly and widely with little moderation.

Eating disorder campaigner James Downs warned that TikTok’s lack of transparency makes it especially dangerous: “We’d never send young people into unsafe physical spaces – so why accept risks in their digital ones?” he said, adding that users have no control over what potentially harmful content they might see.

How Has TikTok Responded?

TikTok maintains that user safety is its highest priority and encourages users to report any content that promotes or glorifies eating disorders, which breaches its community guidelines.

While eating disorder charity Beat has welcomed these measures, it cautions that harmful videos still manage to slip through the platform’s moderation.

The core challenge lies in TikTok’s powerful, engagement-driven algorithm – experts and campaigners warn it can inadvertently funnel vulnerable users toward increasingly extreme and damaging fitness and diet content.

This raises serious questions about the effectiveness of current safeguards and whether the platform can truly prevent the spread of harmful material without fundamentally changing how its algorithm operates.

As concerns mount over the platform’s impact on young people’s mental health, calls for greater transparency, stricter regulation, and more proactive moderation are growing louder – underscoring the urgent need for social media companies to take stronger responsibility for the real-world consequences of their technology.

Daisy Reed
